The gap between companies burning cash on stalled AI projects and those shipping production agents comes down to three decisions made in the first two weeks.

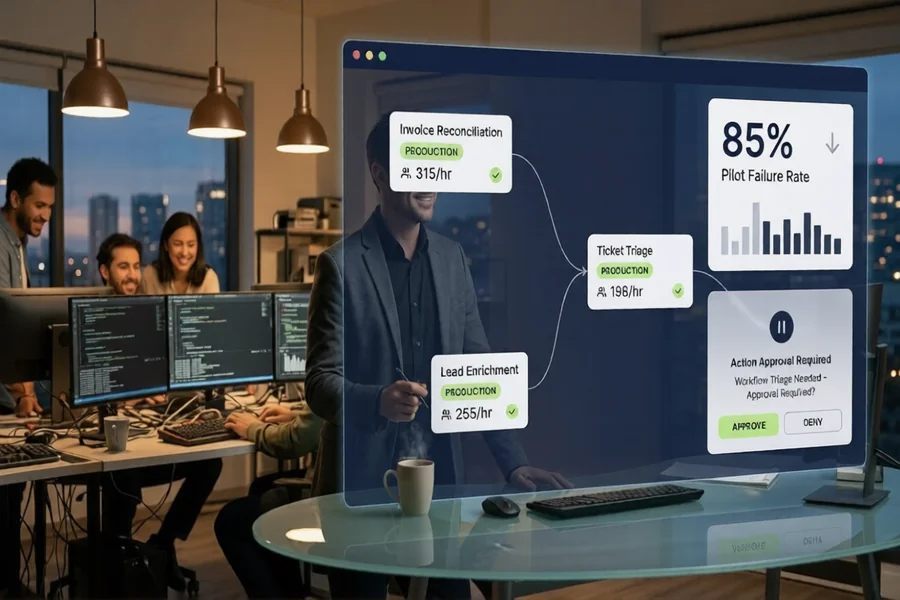

Gartner published a number last summer that should have triggered more emergency meetings than it did: it predicts that over 40% of agentic AI projects will be cancelled by the end of 2027 because of escalating costs, unclear business value, or inadequate risk controls. Pair that with McKinsey’s finding that roughly 80% of enterprises using generative AI report no material impact on earnings, and Grant Thornton’s survey showing only 15% of mid-market firms have moved AI agents into production, and you get a pattern that’s hard to ignore.

Something is breaking between the pilot phase and the production phase. And it isn’t the technology.

Most post-mortems blame the models, the vendors, or the integration work. Having sat in on enough of these reviews, I can tell you the real cause is almost always decided before a single line of code is written. It’s in the workflow selection. It’s in the readiness assessment that never happened. It’s in the guardrails that got treated as a phase-two problem.

Here’s the part nobody mentions. The 15% that succeed aren’t smarter or better funded. They just refuse to skip three specific steps.

The workflow selection problem

Walk into any stalled AI agent pilot and you’ll usually find a team that picked the workflow with the most executive interest rather than the highest ROI. These are not the same thing.

A Fortune 500 operations VP recently told me his company spent nine months trying to automate a customer onboarding flow that involved fourteen stakeholders, six compliance checkpoints, and three legacy systems with no clean APIs. The executive sponsor loved the idea. The agent couldn’t ship. They eventually pivoted to automating internal IT ticket triage, a workflow nobody got excited about in meetings, and hit measurable ROI in six weeks.

The pattern repeats across industries. Teams pick workflows based on visibility, not viability. They automate what sounds impressive in a board deck instead of what has clean data, bounded decision logic, and a clear success metric.

The 15% do the opposite. They scan their operations for workflows that are repetitive, rule-bound, and deterministic in outcome. Invoice reconciliation. Lead enrichment. First-line technical support triage. Internal document search and summarization. Boring workflows with fast payback.

Why readiness assessments aren’t optional anymore

This is where it gets interesting. The companies that succeed don’t just guess which workflows to automate. They run a structured readiness assessment first.

Before deploying anything, they assess which workflows are actually ready for automation and which offer the highest ROI. A proper assessment looks at four dimensions: data quality and accessibility, workflow stability over the last 12 months, the integration surface area, and whether a human-in-the-loop checkpoint exists or needs to be built.
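To make the four dimensions concrete, here is a minimal Python sketch of how an assessment might rank candidate workflows. The 1-to-5 scale, the equal weighting, the `floor` cutoff, and the example scores are all illustrative assumptions, not a standard method. The design point is that one very weak dimension (say, three legacy systems with no clean APIs) should disqualify a workflow outright rather than get averaged away.

```python
from dataclasses import dataclass


@dataclass
class WorkflowReadiness:
    """Illustrative 1-5 scores for the four assessment dimensions (higher is better)."""
    name: str
    data_quality: int          # clean, accessible data
    stability: int             # little change to the workflow over the last 12 months
    integration_surface: int   # 5 = few systems with clean APIs, 1 = many legacy systems
    human_checkpoint: int      # 5 = a review step already exists, 1 = it must be built

    def dimensions(self) -> tuple[int, int, int, int]:
        dims = (self.data_quality, self.stability,
                self.integration_surface, self.human_checkpoint)
        if any(not 1 <= d <= 5 for d in dims):
            raise ValueError(f"{self.name}: every score must be between 1 and 5")
        return dims


def rank(workflows: list[WorkflowReadiness], floor: int = 2) -> list[tuple[str, float]]:
    """Drop any workflow with a dimension below `floor`, then sort by mean score."""
    ranked = []
    for wf in workflows:
        dims = wf.dimensions()
        if min(dims) < floor:
            continue  # one blocking weakness disqualifies the workflow
        ranked.append((wf.name, sum(dims) / len(dims)))  # equal weights (assumption)
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Hypothetical scores, loosely modeled on the examples in this article.
candidates = [
    WorkflowReadiness("Customer onboarding", 2, 2, 1, 3),
    WorkflowReadiness("IT ticket triage", 4, 5, 4, 4),
    WorkflowReadiness("Invoice reconciliation", 5, 4, 3, 3),
]
print(rank(candidates))
# [('IT ticket triage', 4.25), ('Invoice reconciliation', 3.75)]
```

The onboarding flow never makes the list, even though its average would otherwise be middling, because its integration score falls below the floor.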

Teams that skip this step tend to discover halfway through a build that the “simple” workflow they picked actually has fourteen exception paths, three of which require judgment calls that regulators care about. By then, they’ve burned two quarters of budget.

Deloitte’s research on AI transformation found that companies that ran formal readiness assessments before deployment were three times more likely to reach production than those that jumped straight into pilots. The assessment itself rarely takes more than two weeks. The cost of not running it is usually measured in quarters.

The build-versus-buy trap that keeps eating budgets

Once you’ve picked the right workflow, the next fork in the road is whether to self-host an open-source agent framework or deploy on a managed platform. This is where a lot of the 85% quietly collapse.

Self-hosting something like OpenClaw on a DigitalOcean droplet or a bare VPS looks attractive on paper. The framework is free. The hardware is cheap. The engineering team wants the control. What doesn’t show up in the initial estimate is the actual operating cost.

Credential management alone eats weeks. Someone has to build a secrets broker, rotate API keys, handle OAuth refresh tokens, and make sure none of it leaks into agent memory. Then there’s context management. OpenClaw by default burns through tokens on housekeeping chatter between tool calls, and teams routinely report bills three to five times higher than their initial projections. Then there’s the skills marketplace problem, where an unverified plugin can exfiltrate data or run arbitrary code inside your sandbox.
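As a rough illustration of the credential problem, the sketch below shows one pattern a homegrown secrets broker might use: the agent only ever sees an opaque handle, the raw key is resolved inside tool code, and anything a tool returns is redacted before it is written to agent memory. The `SecretsBroker` class, the `secret://` handle format, and the 30-day rotation window are hypothetical; a production setup would sit on a real secrets manager rather than an in-memory dict.

```python
import secrets
import time


class SecretsBroker:
    """Hypothetical in-memory broker: agents receive handles, never raw keys."""

    PREFIX = "secret://"

    def __init__(self, ttl_seconds: float) -> None:
        self._ttl = ttl_seconds
        self._store: dict[str, tuple[str, float]] = {}  # name -> (value, stored_at)

    def put(self, name: str, value: str) -> None:
        self._store[name] = (value, time.monotonic())

    def rotate(self, name: str, new_value: str) -> None:
        self.put(name, new_value)  # replaces the value and resets its age

    def needs_rotation(self, name: str) -> bool:
        _, stored_at = self._store[name]
        return time.monotonic() - stored_at >= self._ttl

    def handle(self, name: str) -> str:
        return self.PREFIX + name  # safe to place in prompts and agent memory

    def resolve(self, handle: str) -> str:
        # Call this only inside tool code, never from model-visible context.
        return self._store[handle.removeprefix(self.PREFIX)][0]

    def redact(self, text: str) -> str:
        # Scrub every current secret value before text is persisted to memory.
        for name, (value, _) in self._store.items():
            text = text.replace(value, f"[REDACTED:{name}]")
        return text


broker = SecretsBroker(ttl_seconds=30 * 24 * 3600)
broker.put("crm_api_key", "sk-live-" + secrets.token_hex(8))


def search_crm(key_handle: str, query: str) -> str:
    key = broker.resolve(key_handle)
    return f"results for {query} (auth={key})"  # a careless tool that echoes its key


memory_entry = broker.redact(search_crm(broker.handle("crm_api_key"), "open tickets"))
print(memory_entry)  # results for open tickets (auth=[REDACTED:crm_api_key])
```

This only redacts values the broker currently holds; keys that were rotated away are no longer scrubbed, which is one of the many details that make this "weeks" of work rather than an afternoon.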

The companies in the 15% overwhelmingly use managed AI agent platforms rather than self-hosted open-source frameworks. Not because self-hosting is impossible. Because the total cost of ownership, once you account for the platform engineer you have to hire and the security incidents you have to respond to, makes managed platforms cheaper within the first six months.

A mid-market logistics company I spoke with recently priced out their self-hosted OpenClaw deployment against managed alternatives. The self-hosted option penciled in at roughly $18,000 a year for infrastructure, plus a 0.4 FTE engineer for maintenance. A managed platform at $29 per agent per month for their five-agent deployment came in at under $1,800 a year with zero platform engineering overhead. They switched in a weekend.
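The arithmetic behind that comparison is easy to check. The sketch below reproduces the managed figure ($29 × 5 agents × 12 months = $1,740) and adds the 0.4 FTE to the self-hosted side. The $150,000 fully loaded engineer cost is a hypothetical placeholder, not a number from the company in question; swap in your own.

```python
def managed_annual_cost(price_per_agent_month: float, agents: int) -> float:
    """Per-agent managed pricing, annualized."""
    return price_per_agent_month * agents * 12


def self_hosted_annual_cost(infra_per_year: float, maintenance_fte: float,
                            loaded_cost_per_fte: float) -> float:
    """Infrastructure plus the share of an engineer needed to keep it running."""
    return infra_per_year + maintenance_fte * loaded_cost_per_fte


HYPOTHETICAL_LOADED_FTE_COST = 150_000  # assumption: replace with your own figure

managed = managed_annual_cost(29, 5)
self_hosted = self_hosted_annual_cost(18_000, 0.4, HYPOTHETICAL_LOADED_FTE_COST)

print(f"managed:     ${managed:,.0f}/year")                # managed:     $1,740/year
print(f"self-hosted: ${self_hosted:,.0f}/year")            # self-hosted: $78,000/year
print(f"difference:  ${self_hosted - managed:,.0f}/year")  # difference:  $76,260/year
```

Even before the engineer is counted, infrastructure alone ($18,000 against $1,740) is roughly ten times the managed cost in this example.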

Governance isn’t paperwork. It’s the kill switch.

Stay with me here, because this is where most pilots fail spectacularly in ways that end up in incident reports.

An AI agent with tool access and no governance is a production incident waiting to happen. The ClawHavoc exploit earlier this year, where over 30,000 exposed OpenClaw instances leaked API credentials through memory persistence, should have been a wake-up call for the industry. In practice, it mostly just generated LinkedIn posts.

The 15% treat governance as a day-one requirement, not a compliance afterthought. They require action approval for anything touching money, customer data, or external systems. They implement rate limits at the agent level, not just the API level. They build audit logs that a regulator can actually read. And critically, they have a kill switch that works.
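A minimal sketch of what the first three of those controls can look like when they sit in front of every tool call (the kill switch is a separate concern). Everything here is hypothetical: the `AgentGovernor` class, the category names standing in for "money, customer data, or external systems", the sliding-window limit, and the `approver` callback, which in practice would route to a human reviewer. The audit log is written as one JSON object per line so it stays readable to people outside engineering.

```python
import json
import os
import tempfile
import time
from collections import deque
from dataclasses import dataclass, field
from typing import Any, Callable

# Hypothetical categories standing in for money, customer data, and external systems.
SENSITIVE_CATEGORIES = {"payment", "customer_data", "external_system"}


class ApprovalDenied(Exception):
    pass


class RateLimited(Exception):
    pass


@dataclass
class AgentGovernor:
    agent_id: str
    max_actions: int             # per-agent limit, independent of any API-level limit
    window_seconds: float
    audit_path: str
    approver: Callable[[str, str, dict], bool]  # human-in-the-loop hook
    _recent: deque = field(default_factory=deque)

    def _audit(self, action: str, category: str, args: dict, outcome: str) -> None:
        record = {"ts": time.time(), "agent": self.agent_id, "action": action,
                  "category": category, "args": args, "outcome": outcome}
        with open(self.audit_path, "a", encoding="utf-8") as log:
            log.write(json.dumps(record) + "\n")

    def execute(self, action: str, category: str, args: dict,
                tool: Callable[..., Any]) -> Any:
        now = time.monotonic()
        # Sliding window: forget actions older than window_seconds.
        while self._recent and now - self._recent[0] >= self.window_seconds:
            self._recent.popleft()
        if len(self._recent) >= self.max_actions:
            self._audit(action, category, args, "rate_limited")
            raise RateLimited(f"{self.agent_id} exceeded {self.max_actions} actions")
        if category in SENSITIVE_CATEGORIES and not self.approver(self.agent_id, action, args):
            self._audit(action, category, args, "denied")
            raise ApprovalDenied(f"{action} needs human approval")
        self._recent.append(now)
        result = tool(**args)
        self._audit(action, category, args, "executed")
        return result


# Usage: a reviewer who declines everything, and a budget of two actions per minute.
audit_file = os.path.join(tempfile.mkdtemp(), "audit.jsonl")
gov = AgentGovernor("billing-agent", max_actions=2, window_seconds=60,
                    audit_path=audit_file, approver=lambda agent, action, args: False)
gov.execute("lookup_invoice", "read_only", {"invoice_id": "INV-1"},
            tool=lambda invoice_id: {"id": invoice_id})
try:
    gov.execute("issue_refund", "payment", {"amount": 50}, tool=lambda amount: amount)
except ApprovalDenied as exc:
    print(exc)  # issue_refund needs human approval
```

Denied and rate-limited attempts are logged too, which is usually what an auditor wants to see first.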

Built-in governance features like action approval and a kill switch are what separate production deployments from science projects. If your agent can wire money, send emails to customers, or modify production databases, and you don’t have a one-click way to pause it across every in-progress session, you are one prompt injection away from a headline.
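The "one-click pause across every session" requirement mostly comes down to where the check lives: every session must consult one shared flag before each tool call. In this sketch the flag is a `threading.Event` inside a single process; that is an assumption for illustration, since a deployment spread across machines would need the flag in shared storage. `KillSwitch`, `AgentSession`, and `AgentHalted` are hypothetical names.

```python
import threading
from typing import Any, Callable


class AgentHalted(RuntimeError):
    """Raised when a session tries to act after the kill switch has been tripped."""


class KillSwitch:
    """One shared flag consulted by every session (single-process assumption)."""

    def __init__(self) -> None:
        self._halted = threading.Event()
        self.reason = ""

    def trip(self, reason: str) -> None:
        self.reason = reason
        self._halted.set()

    def reset(self) -> None:
        self.reason = ""
        self._halted.clear()

    def check(self) -> None:
        if self._halted.is_set():
            raise AgentHalted(self.reason)


class AgentSession:
    def __init__(self, session_id: str, switch: KillSwitch) -> None:
        self.session_id = session_id
        self.switch = switch
        self.completed: list[str] = []

    def run_step(self, tool_name: str, tool: Callable[..., Any], *args: Any) -> Any:
        self.switch.check()  # every step in every session hits the same switch
        result = tool(*args)
        self.completed.append(tool_name)
        return result


send_email = lambda to: f"sent to {to}"  # stand-in for a real side-effecting tool
switch = KillSwitch()
sessions = [AgentSession(f"s{i}", switch) for i in range(3)]
sessions[0].run_step("send_email", send_email, "a@example.com")

switch.trip("suspected prompt injection")  # the "one click"
halted = 0
for s in sessions:
    try:
        s.run_step("send_email", send_email, "b@example.com")
    except AgentHalted:
        halted += 1
print(halted)  # 3
```

This stops the next action, not one already in flight: a tool call that has started runs to completion, which is another reason to keep individual tool calls small and reversible.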

This is also where the options start to diverge: framework alternatives like Hermes, managed platforms like xCloud or BetterClaw, and hybrid setups where teams self-host but add a commercial governance layer. The platforms that bake governance into the runtime tend to reach production faster than those that treat it as a configurable add-on.

The two-to-three workflow rule

Here’s a counterintuitive finding. The companies that succeed don’t try to automate everything at once. They pick two or three workflows, ship them to production, measure the ROI for a quarter, and only then expand.

This sounds obvious. It is apparently very hard to follow.

The failure mode looks like this. An AI steering committee gets convened. Every department nominates workflows. A consultant produces a beautiful prioritization matrix with fourteen candidates. The engineering team tries to build a platform that can handle all fourteen. Eighteen months later, none of them are in production and the steering committee has quietly stopped meeting.

The 15% pick three workflows, maximum. They ship the first one in six to eight weeks. They instrument it obsessively. They measure dollars saved or revenue generated, not vanity metrics like “queries handled.” Only after that first workflow is stable and profitable do they start on the second.
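One way to turn that discipline into a rule a team can't quietly bend is sketched below: record a quarter's results in dollars, compute net value, and allow another workflow to start only when fewer than three are active and the latest one has been stable and net-positive for a full quarter. The field names, the 13-week threshold, and the example figures are illustrative assumptions. There is deliberately no field for "queries handled".

```python
from dataclasses import dataclass

MAX_ACTIVE_WORKFLOWS = 3      # "three workflows, maximum"
QUARTER_WEEKS = 13            # roughly one quarter


@dataclass
class QuarterResult:
    workflow: str
    dollars_saved: float
    revenue_generated: float
    run_cost: float           # platform, model usage, and human review time
    weeks_stable: int         # consecutive weeks without a production incident


def net_value(q: QuarterResult) -> float:
    return q.dollars_saved + q.revenue_generated - q.run_cost


def can_start_next(active: list[str], latest: QuarterResult) -> bool:
    """Expand only if under the cap and the latest workflow is stable and profitable."""
    return (len(active) < MAX_ACTIVE_WORKFLOWS
            and latest.weeks_stable >= QUARTER_WEEKS
            and net_value(latest) > 0)


# Hypothetical first-quarter numbers for a single production workflow.
triage = QuarterResult("IT ticket triage", dollars_saved=42_000,
                       revenue_generated=0, run_cost=6_000, weeks_stable=13)
print(net_value(triage))                             # 36000
print(can_start_next(["IT ticket triage"], triage))  # True
```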

Gartner’s analysis suggests that companies with fewer than four production agents in year one have a significantly higher probability of reaching twenty-plus agents by year three than companies that attempted ten or more in year one. Scope discipline compounds.

What the 15% actually look like in practice

If you strip away the vendor pitches and the consulting frameworks, the companies getting value from AI agents share a strikingly similar playbook.

They start with a readiness assessment that takes two weeks and produces a ranked list of workflows with realistic ROI estimates. They pick two or three workflows with clean data, bounded logic, and fast feedback loops. They deploy on managed infrastructure with built-in governance rather than assembling their own stack from open-source parts. They build human-in-the-loop checkpoints before they need them, not after an incident forces the issue. And they measure success in actual business metrics over a full quarter before expanding scope.

None of this is novel. None of it requires a research breakthrough. It’s project discipline applied to a new category of tooling.

The 85% that fail aren’t victims of immature technology. They’re victims of a rollout pattern that would have failed with any technology. Skipping the assessment, picking workflows for political reasons, underestimating operational costs, and treating governance as paperwork are mistakes that predate AI and will outlast it.

The companies pulling ahead right now are the ones treating agent deployment like any other production system. Boring. Measured. Instrumented. Reversible.

That’s the real gap. Not capability. Not budget. Just the willingness to do the unsexy work before the first agent ships.